How to Modernize Community Search with Hybrid Retrieval and Automated Evaluation

Introduction

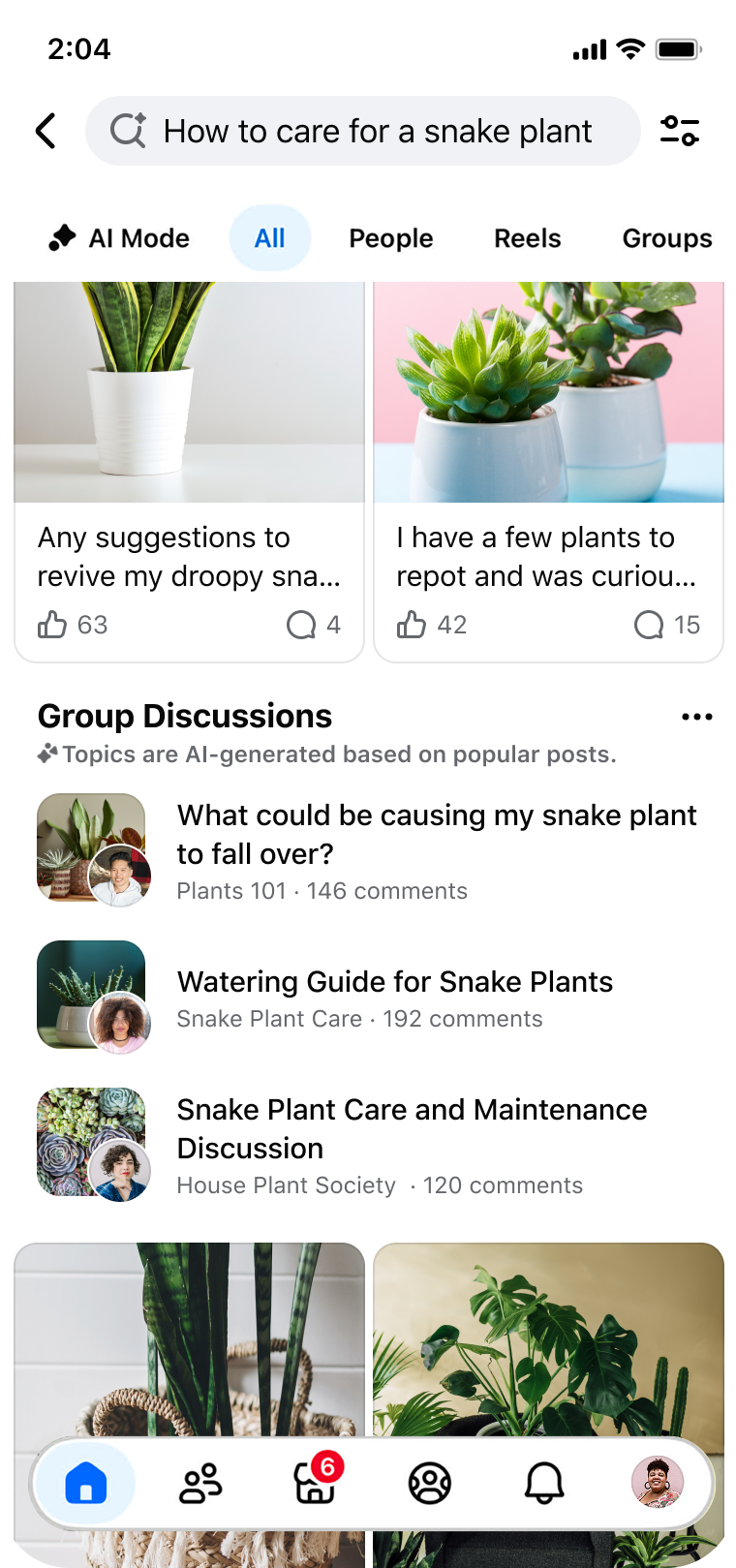

Communities thrive on shared knowledge, but finding the right information in a sea of conversations can be frustrating. Whether you're a platform developer or a community manager, you can transform how users discover, consume, and validate community content. This step-by-step guide draws on Facebook's approach to modernizing Groups Search: moving beyond keyword matching to a hybrid retrieval architecture and automated model-based evaluation. Follow these steps to unlock the power of community knowledge for your users.

What You Need

- Access to a community platform with search functionality (e.g., forums, groups, or social networks)

- Basic understanding of information retrieval systems (lexical and semantic)

- Team with machine learning and software engineering expertise

- Dataset of queries and relevant community posts for evaluation

- Infrastructure for deploying and monitoring models (cloud or on-premises)

- Clear metrics for search engagement (click-through rates, relevance scores)

Step-by-Step Guide

Step 1: Diagnose Friction Points in Community Search

Start by identifying the three main friction points users face: discovery, consumption, and validation.

- Discovery: Users struggle when their natural language queries don't match exact keywords. For example, a search for “small individual cakes with frosting” might miss posts about “cupcakes.”

- Consumption: Even when users find relevant threads, they must scroll through many comments to extract the consensus. This “effort tax” discourages deep engagement.

- Validation: Users often need to verify decisions (e.g., buying a vintage car) using community expertise scattered across discussions, which is hard to aggregate.

Gather user feedback and analyze search logs to quantify these issues. For instance, measure zero-result rates for common queries or track how often users click beyond the first page.
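A minimal sketch of these two log metrics in Python, assuming a hypothetical log schema (`SearchEvent` with `query`, `num_results`, and `first_click_page` fields) and made-up example rows. Your logging pipeline and field names will differ.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class SearchEvent:
    """One logged search. This schema is a hypothetical example."""
    query: str
    num_results: int
    first_click_page: Optional[int]  # 1-based results page of the first click, None if no click


def zero_result_rates(events, min_count=2):
    """Zero-result rate per normalized query, for queries seen at least min_count times."""
    totals, zeros = Counter(), Counter()
    for e in events:
        q = " ".join(e.query.lower().split())  # lowercase and collapse whitespace
        totals[q] += 1
        if e.num_results == 0:
            zeros[q] += 1
    return {q: zeros[q] / n for q, n in totals.items() if n >= min_count}


def beyond_first_page_rate(events):
    """Share of clicked searches whose first click landed past page 1."""
    clicked = [e for e in events if e.first_click_page is not None]
    if not clicked:
        return 0.0
    return sum(e.first_click_page > 1 for e in clicked) / len(clicked)


# Made-up rows for illustration only.
events = [
    SearchEvent("small individual cakes with frosting", 0, None),
    SearchEvent("Small individual cakes  with frosting", 0, None),
    SearchEvent("cupcakes", 42, 1),
    SearchEvent("cupcakes", 40, 3),
    SearchEvent("vintage car inspection", 5, 2),
]
print(zero_result_rates(events))       # {'small individual cakes with frosting': 1.0, 'cupcakes': 0.0}
print(beyond_first_page_rate(events))  # 0.6666666666666666
```

Sorting the per-query rates by traffic highlights the common queries that most often come back empty.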

Step 2: Adopt a Hybrid Retrieval Architecture

Traditional keyword-based (lexical) systems match terms rather than intent, so they often miss posts phrased differently from the query. Replace or augment them with a hybrid approach that combines lexical matching and semantic understanding.

- Lexical component: Use BM25 or a similar algorithm to match exact terms, which works well for product names and jargon.

- Semantic component: Employ embeddings (e.g., from BERT or Sentence-BERT) to represent queries and posts in a dense vector space, capturing meaning beyond keywords. For example, a query for “Italian coffee drink” can match a post about “cappuccino” even though the post never mentions “coffee.”

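- Fusion strategy: Combine results from both components using a weighted score or a learned ranking model. Lexical matching keeps results precise for exact terms, while semantic matching raises recall for paraphrased queries; fusing the two aims to get the benefits of both.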
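A minimal sketch of lexical-plus-semantic fusion, written from scratch in Python so it runs without dependencies. It assumes whitespace tokenization, the standard Okapi BM25 formula with common defaults (`k1=1.5`, `b=0.75`), hand-made toy vectors in place of real BERT or Sentence-BERT embeddings, and a min-max-normalized weighted sum with a hypothetical `alpha` weight as the fusion rule. The names `BM25`, `cosine`, and `hybrid_rank` are illustrative, not taken from Facebook's system.

```python
import math
from collections import Counter


def tokenize(text):
    # Whitespace tokenizer for illustration; real systems also stem and strip punctuation.
    return text.lower().split()


class BM25:
    """Okapi BM25 over a small in-memory corpus."""

    def __init__(self, docs, k1=1.5, b=0.75):
        self.k1, self.b = k1, b
        self.tfs = [Counter(tokenize(d)) for d in docs]
        self.lengths = [sum(tf.values()) for tf in self.tfs]
        self.avgdl = sum(self.lengths) / len(self.tfs)
        n = len(self.tfs)
        df = Counter(term for tf in self.tfs for term in tf)  # documents containing each term
        # Lucene-style IDF, which stays non-negative even for very common terms.
        self.idf = {t: math.log(1 + (n - f + 0.5) / (f + 0.5)) for t, f in df.items()}

    def score(self, query, i):
        tf, dl = self.tfs[i], self.lengths[i]
        total = 0.0
        for term in tokenize(query):
            f = tf.get(term, 0)
            if f:
                norm = f + self.k1 * (1 - self.b + self.b * dl / self.avgdl)
                total += self.idf[term] * f * (self.k1 + 1) / norm
        return total


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    nu, nv = math.sqrt(sum(a * a for a in u)), math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0


def min_max(scores):
    # Rescale to [0, 1] so BM25 scores and cosine similarities are comparable.
    lo, hi = min(scores), max(scores)
    return [0.0] * len(scores) if hi == lo else [(s - lo) / (hi - lo) for s in scores]


def hybrid_rank(query, query_vec, docs, doc_vecs, alpha=0.5):
    """Return document indices sorted by alpha * lexical + (1 - alpha) * semantic."""
    bm25 = BM25(docs)  # rebuilt per call for brevity; build the index once in practice
    lex = min_max([bm25.score(query, i) for i in range(len(docs))])
    sem = min_max([cosine(query_vec, v) for v in doc_vecs])
    fused = [alpha * l + (1 - alpha) * s for l, s in zip(lex, sem)]
    return sorted(range(len(docs)), key=lambda i: fused[i], reverse=True)


docs = [
    "fresh cupcakes with buttercream frosting",
    "vintage car inspection checklist",
    "how to frost a layer cake",
]
query = "small individual cakes with frosting"
# Toy 3-d vectors standing in for real sentence embeddings (hypothetical values).
query_vec = [0.9, 0.1, 0.0]
doc_vecs = [[0.8, 0.2, 0.0], [0.0, 0.1, 0.9], [0.7, 0.3, 0.1]]
print(hybrid_rank(query, query_vec, docs, doc_vecs))             # [0, 2, 1]
print(hybrid_rank(query, query_vec, docs, doc_vecs, alpha=1.0))  # [0, 1, 2]
```

With `alpha=1.0` (lexical only), the cake-frosting post ties with the car post at zero and keeps its corpus position; the semantic term lifts it to second place. Reciprocal Rank Fusion, which sums `1 / (k + rank)` across the two ranked lists (`k=60` is a common choice), is a popular alternative to score normalization because it uses only ranks.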

Evaluate the hybrid system against your lexical baseline on the evaluation dataset. In Facebook's case, this approach “fundamentally transformed” search by reducing missed relevant content.
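One simple way to run that comparison is recall@k over a labeled query set: the share of each query's known-relevant posts that appear in the top k results. The sketch below assumes relevance judgments stored as a query-to-post-IDs map; the queries, post IDs, and rankings are made up for illustration.

```python
def recall_at_k(ranked_ids, relevant_ids, k):
    """Fraction of the relevant documents that appear in the top-k results."""
    if not relevant_ids:
        return 0.0
    return len(set(ranked_ids[:k]) & set(relevant_ids)) / len(relevant_ids)


def mean_recall_at_k(runs, qrels, k=10):
    """runs: query -> ranked doc ids; qrels: query -> set of relevant doc ids."""
    scores = [recall_at_k(runs.get(q, []), rel, k) for q, rel in qrels.items()]
    return sum(scores) / len(scores)


# Made-up judgments and rankings for two queries.
qrels = {
    "small individual cakes with frosting": {"post_cupcakes", "post_frosting_tips"},
    "italian coffee drink": {"post_cappuccino"},
}
lexical_run = {
    "small individual cakes with frosting": ["post_frosting_tips", "post_car"],
    "italian coffee drink": ["post_espresso_machine", "post_car"],
}
hybrid_run = {
    "small individual cakes with frosting": ["post_cupcakes", "post_frosting_tips"],
    "italian coffee drink": ["post_cappuccino", "post_espresso_machine"],
}
print(mean_recall_at_k(lexical_run, qrels, k=2))  # 0.25
print(mean_recall_at_k(hybrid_run, qrels, k=2))   # 1.0
```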

Step 3: Implement Automated Model-Based Evaluation

Manual evaluation of search relevance is slow and inconsistent. Automate it with a model that learns from human relevance ratings and then scores search results in place of human judges.

- Collect ground truth: Have annotators rate query-document pairs for relevance (e.g., on a 0-3 scale). Cover diverse scenarios like synonyms, paraphrases, and ambiguous queries.

- Train an evaluation model: Use a regression or classification model (e.g., a cross-encoder) to predict relevance scores. Before relying on it, confirm that its predictions agree with human ratings on a held-out set of annotated pairs.

- Run automated evaluations: For each system change, run the test queries, let the model score the returned query-document pairs, and track ranking metrics such as Mean Reciprocal Rank (MRR) or Normalized Discounted Cumulative Gain (NDCG).

- Monitor error rates: Facebook emphasized “no increase in error rates.” Ensure your automated evaluation flags regressions in accuracy, false positives, or biases.
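The two ranking metrics named above can be computed as follows, given per-document grades on the 0-3 scale (produced by annotators or by the evaluation model). This is a sketch: it uses the exponential-gain form of DCG, treats unjudged documents as grade 0, and builds the ideal ranking from the judged documents only; other NDCG variants exist. The grades are toy values.

```python
import math


def reciprocal_rank(ranked_ids, grades, min_grade=1):
    """1/rank of the first result graded at least min_grade, else 0."""
    for rank, doc_id in enumerate(ranked_ids, start=1):
        if grades.get(doc_id, 0) >= min_grade:
            return 1.0 / rank
    return 0.0


def dcg(gains):
    # Exponential gain (2^g - 1) with a log2 position discount, one common DCG variant.
    return sum((2 ** g - 1) / math.log2(pos + 2) for pos, g in enumerate(gains))


def ndcg_at_k(ranked_ids, grades, k=10):
    """DCG of the top-k results divided by the DCG of the ideal ordering of judged docs."""
    gains = [grades.get(doc_id, 0) for doc_id in ranked_ids[:k]]
    ideal = dcg(sorted(grades.values(), reverse=True)[:k])
    return dcg(gains) / ideal if ideal > 0 else 0.0


def evaluate(runs, judged, k=10):
    """runs: query -> ranked doc ids; judged: query -> {doc_id: grade}. Returns (MRR, mean NDCG@k)."""
    queries = list(judged)
    mrr = sum(reciprocal_rank(runs.get(q, []), judged[q]) for q in queries) / len(queries)
    ndcg = sum(ndcg_at_k(runs.get(q, []), judged[q], k) for q in queries) / len(queries)
    return mrr, ndcg


grades = {"a": 3, "b": 2, "c": 0, "d": 1}  # toy grades for a single query
print(reciprocal_rank(["c", "a", "b"], grades))  # 0.5
print(ndcg_at_k(["a", "b", "d"], grades, k=3))   # 1.0 (ideal order)
```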

This evaluation loop allows rapid iteration without relying on human judges for every experiment.
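To turn “no increase in error rates” into an automatic check, you could gate each change on per-query metric deltas from the evaluator. The sketch below is hypothetical: the function name and both thresholds (`max_mean_drop`, `max_worse_share`) are illustrative choices, not values from Facebook's work.

```python
from statistics import mean


def regression_check(baseline, candidate, max_mean_drop=0.01, max_worse_share=0.2, eps=1e-9):
    """Compare per-query scores (e.g. NDCG@10 from the automated evaluator).

    baseline, candidate: dicts mapping query -> metric value.
    Fails if the average score drops by more than max_mean_drop, or if more than
    max_worse_share of the shared queries get worse. Thresholds are illustrative.
    """
    shared = sorted(set(baseline) & set(candidate))
    if not shared:
        raise ValueError("baseline and candidate share no queries")
    deltas = [candidate[q] - baseline[q] for q in shared]
    worse = [q for q, d in zip(shared, deltas) if d < -eps]
    report = {
        "mean_delta": mean(deltas),
        "worse_share": len(worse) / len(shared),
        "worse_queries": worse,
    }
    passed = report["mean_delta"] >= -max_mean_drop and report["worse_share"] <= max_worse_share
    return passed, report


baseline = {"q1": 0.60, "q2": 0.70, "q3": 0.50}
candidate = {"q1": 0.65, "q2": 0.72, "q3": 0.30}
passed, report = regression_check(baseline, candidate)
print(passed, report["worse_queries"])  # False ['q3']
```

Checking the share of worsened queries, not just the mean, catches changes that help popular queries while quietly breaking a subset of others.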

Step 4: Validate Improvements in Engagement and Relevance

Deploy the new search system to a subset of users (A/B test) and compare key metrics against the baseline.
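A common way to pick that subset is deterministic hash-based bucketing, so each user stays in the same arm across sessions. A minimal sketch, assuming string user IDs and a hypothetical experiment name; the 10% treatment share is only an example.

```python
import hashlib


def assign_bucket(user_id: str, experiment: str, treatment_share: float = 0.1) -> str:
    """Deterministically assign a user to 'treatment' or 'control'.

    Hashing the experiment name together with the user ID keeps assignment stable
    across sessions and independent across experiments.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).hexdigest()
    point = int(digest[:8], 16) / 0x100000000  # first 32 bits mapped to [0, 1)
    return "treatment" if point < treatment_share else "control"


arms = [assign_bucket(f"user{i}", "hybrid_search_v1") for i in range(10_000)]
print(arms.count("treatment") / len(arms))  # close to 0.1
```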

- Engagement: Measure click-through rates, time spent on results, and query reformulation rates. Lower reformulation suggests better first results.

- Relevance: Use explicit feedback (thumbs up/down) or implicit signals (dwell time, quick returns to the results page).

- Consumption: Track how often users find answers within the first few results; a higher rate means a lower “effort tax.”

- Validation: Survey users on whether search helped them make decisions (e.g., purchases).
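For rate metrics such as click-through rate, a two-proportion z-test is one standard way to check whether the treatment's difference from control is larger than chance. This is a sketch with made-up counts, and it assumes searches are independent; if many searches come from the same users, a user-level analysis is safer.

```python
import math


def two_proportion_z_test(clicks_a, n_a, clicks_b, n_b):
    """Two-sided z-test for a difference in click-through rate between
    control (a) and treatment (b), using the pooled standard error.
    Returns (absolute lift, z statistic, p-value)."""
    p_a, p_b = clicks_a / n_a, clicks_b / n_b
    pooled = (clicks_a + clicks_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # No variation at all (0% or 100% CTR in both arms): nothing to test.
        return p_b - p_a, 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2))
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p_b - p_a, z, p_value


# Made-up counts: 10,000 searches per arm.
lift, z, p = two_proportion_z_test(clicks_a=1000, n_a=10000, clicks_b=1100, n_b=10000)
print(f"lift={lift:.3f} z={z:.2f} p={p:.3f}")
```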

Facebook reported “tangible improvements in search engagement and relevance” with no error increase. Repeat steps as needed, using automated evaluation to guide refinements.

Tips for Success

- Start small: Focus on one community or topic domain first to prove the concept before scaling.

- Iterate on query understanding: Use synonyms and query expansion alongside embeddings to handle variations.

- Balance speed and quality: Semantic retrieval can be slower, so cache results for frequent queries or use approximate nearest neighbor (ANN) indexes.

- Involve the community: Let users flag irrelevant results to continuously improve ground truth.

- Publish your findings: As Facebook did with its paper, share your methodology to foster trust and collaboration.

- Monitor for bias: Ensure your evaluation model doesn't favor popular content over niche expertise.
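For the query-understanding tip, a minimal synonym-based expansion might look like the sketch below. The synonym map is a hypothetical, hand-written example reusing this guide's cupcake and cappuccino cases; each variant would be sent to lexical retrieval alongside the embedding-based search.

```python
import re

# Hypothetical hand-curated synonym map (phrase -> alternatives); in practice it might be
# mined from query reformulations in your search logs.
SYNONYMS = {
    "small individual cakes": {"cupcakes"},
    "italian coffee drink": {"cappuccino", "espresso"},
}


def expand_query(query, synonyms):
    """Return the normalized query plus one variant per synonym substitution."""
    q = " ".join(query.lower().split())
    variants = [q]
    for phrase, alternatives in synonyms.items():
        # Word boundaries keep a phrase from matching inside a longer word
        # (e.g. "cake" inside "cupcakes").
        pattern = rf"\b{re.escape(phrase)}\b"
        if re.search(pattern, q):
            for alt in sorted(alternatives):
                variant = re.sub(pattern, lambda _m: alt, q)
                if variant not in variants:
                    variants.append(variant)
    return variants


print(expand_query("Small individual cakes with frosting", SYNONYMS))
# ['small individual cakes with frosting', 'cupcakes with frosting']
```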

By following these steps, you can diagnose friction, redesign your search architecture, automate evaluation, and validate real-world impact—turning community knowledge into an easily accessible resource.
