Decoding Your 2025 Wrapped: 10 Tech Secrets Behind the Magic

Every December, millions of Spotify users eagerly tap into their personalized Wrapped experience: a vivid, data-driven journey through their year in music and podcasts. But what powers these delightful summaries? Behind the scenes, a sophisticated tapestry of machine learning, massive data pipelines, and creative storytelling algorithms works in concert. In this article, we unveil the technical wizardry that transforms raw listening logs into your unique 2025 Wrapped highlights. From audio analysis to narrative generation, here are 10 things you need to know about the technology that makes your year-end recap possible.

1. Real-Time Stream Processing at Planetary Scale

Every stream you start, whether you hit play for 5 seconds or listen to a full album, becomes a data point. To build Wrapped, Spotify ingests billions of events per day using a custom-built stream processing framework. This system, built on top of Apache Kafka and in-house technologies, ensures that whether you listen on a subway ride or offline, your plays are aggregated, deduplicated, and timestamped. The result is a near-real-time view of global listening habits, which then feeds into the personalization engine that curates your top songs, artists, and genres. Without this resilient pipeline, Wrapped could not be built from a complete and accurate record of your year.
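The deduplicate-then-aggregate step can be sketched in a few lines. This is a minimal batch-style illustration, not Spotify's pipeline: the `PlayEvent` schema and the `aggregate` helper are hypothetical, and a real stream processor would keep the seen event IDs as windowed state rather than an unbounded in-memory set.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PlayEvent:
    # Hypothetical event schema; the real field names are not public.
    event_id: str  # unique ID assigned by the client, reused on retries
    user_id: str
    track_id: str
    ms_played: int


def aggregate(events):
    """Drop duplicate deliveries, then total plays and listening time per (user, track)."""
    seen = set()
    plays = defaultdict(int)
    ms_total = defaultdict(int)
    for ev in events:
        if ev.event_id in seen:
            continue  # at-least-once delivery can replay the same event
        seen.add(ev.event_id)
        key = (ev.user_id, ev.track_id)
        plays[key] += 1
        ms_total[key] += ev.ms_played
    return dict(plays), dict(ms_total)


events = [
    PlayEvent("e1", "u1", "t1", 180_000),
    PlayEvent("e1", "u1", "t1", 180_000),  # duplicate retry of the same event
    PlayEvent("e2", "u1", "t1", 5_000),
    PlayEvent("e3", "u1", "t2", 240_000),
]
plays, ms_total = aggregate(events)
print(plays)     # {('u1', 't1'): 2, ('u1', 't2'): 1}
print(ms_total)  # {('u1', 't1'): 185000, ('u1', 't2'): 240000}
```

Deduplicating on a client-assigned ID is what lets the transport retry freely: a resent event changes nothing downstream.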

2. Deep Audio Embeddings for Taste Discovery

How does Spotify know that your favorite song of the year is indeed 'yours'? It goes beyond simple play counts. The company employs deep neural networks that convert audio waveforms into high-dimensional embeddings. These embeddings capture not only the literal sound but also emotional and contextual cues—mood, tempo, energy, even the likelihood of being a 'morning song.' By comparing embeddings across your listening history, the system can identify breakout tracks that define your year, even if you only listened to them a handful of times. This is how Wrapped highlights that obscure indie gem you discovered in March.
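One hypothetical way to use such embeddings is to compare each track against a play-weighted "taste centroid" of your year, so a rarely played but distinctive track can still stand out. The toy three-dimensional vectors, the track names, and the novelty score below are assumptions for illustration; how Spotify actually scores breakout tracks is not described here.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def taste_centroid(history):
    """Play-count-weighted mean of the embeddings in a listening history."""
    total = sum(count for _, count in history.values())
    dim = len(next(iter(history.values()))[0])
    centroid = [0.0] * dim
    for emb, count in history.values():
        for i, x in enumerate(emb):
            centroid[i] += x * count / total
    return centroid


# Hypothetical toy embeddings: (vector, play count). Real models use far more dimensions.
history = {
    "synth_anthem": ([0.9, 0.1, 0.0], 40),
    "retro_drive": ([0.8, 0.2, 0.1], 25),
    "acoustic_ballad": ([0.0, 0.1, 0.9], 3),
}
centroid = taste_centroid(history)
# Novelty: how far a track sits from your usual taste (1 - cosine similarity).
novelty = {t: 1 - cosine(emb, centroid) for t, (emb, _) in history.items()}
most_distinctive = max(novelty, key=novelty.get)
print(most_distinctive)  # acoustic_ballad
```

A practical scorer would likely blend a signal like this with play counts and recency rather than rely on distance alone.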

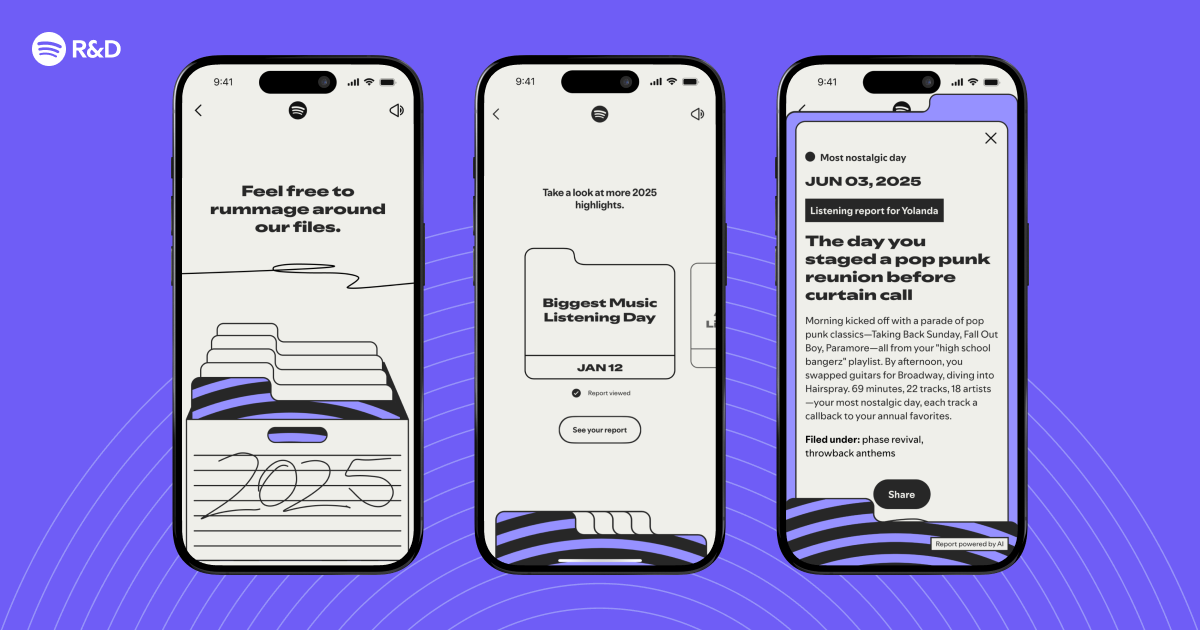

3. Natural Language Generation for Personalized Narratives

Each Wrapped card tells a micro-story: "You revisited the 80s this fall" or "Your peak listening hour was 3 AM." These narratives are crafted using a combination of rule-based templates and large language models (LLMs). Natural language generation (NLG) algorithms parse your listening patterns—such as seasonal shifts, repeat sessions, and genre fluidity—and select from hundreds of story arcs. In 2025, Spotify integrated a custom LLM fine-tuned on music journalism, adding warmth and surprise to the text. The result: a narrative that feels written just for you, not a robotic list of stats.
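The rule-based half of this approach can be sketched as condition-plus-template pairs. In this hypothetical example, each `StoryRule` checks a listening statistic and the highest-priority match is filled in; the rule names, priorities, and stats keys are invented, and the LLM rewriting step is not modeled.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class StoryRule:
    # Hypothetical rule: a condition on listening stats plus a text template.
    name: str
    applies: Callable[[dict], bool]
    template: str
    priority: int


RULES = [
    StoryRule("night_owl", lambda s: 0 <= s["peak_hour"] < 5,
              "Your peak listening hour was {peak_hour_label}.", 2),
    StoryRule("decade_revival", lambda s: s["top_decade_share"] >= 0.3,
              "You revisited the {top_decade}s this {season}.", 1),
]


def pick_story(stats: dict) -> Optional[str]:
    """Fill the highest-priority template whose condition matches the stats."""
    matches = [r for r in RULES if r.applies(stats)]
    if not matches:
        return None
    best = max(matches, key=lambda r: r.priority)
    return best.template.format(**stats)


stats = {"peak_hour": 3, "peak_hour_label": "3 AM", "top_decade": 80,
         "top_decade_share": 0.42, "season": "fall"}
print(pick_story(stats))  # Your peak listening hour was 3 AM.
```

Templates guarantee that every card states a true, checkable fact; a language model layered on top can then vary the wording.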

4. Collaborative Filtering for Genre and Mood Graphs

Your Wrapped includes visual clusters of genres and moods—how are these constructed? Behind the scenes, Spotify uses collaborative filtering, the same technique powering its recommendation engine. By analyzing the listening habits of millions, it creates a 'genre-mood' graph where similar tracks are closely connected. Your personal listening history then maps onto this graph, revealing patterns like "you traveled from synthwave to lo-fi" or "your year was a spiral of jazz and hip-hop." This graph is computed using matrix factorization and updated weekly, ensuring your 2025 Wrapped reflects your most recent obsessions.
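As a rough illustration of the matrix factorization step, the sketch below factors a toy listener-by-track play matrix with a truncated SVD and compares tracks by the cosine similarity of their latent vectors. Recommenders working from implicit feedback commonly use alternating least squares instead; SVD is used here only for brevity, and the data and track names are hypothetical.

```python
import numpy as np

# Rows: listeners, columns: tracks. Values: play counts (hypothetical toy data).
tracks = ["synthwave_a", "synthwave_b", "lofi_a", "lofi_b"]
plays = np.array([
    [5, 4, 0, 0],
    [4, 5, 1, 0],
    [0, 0, 5, 4],
    [0, 1, 4, 5],
], dtype=float)

# Truncated SVD: keep only the k strongest latent factors.
k = 2
u, s, vt = np.linalg.svd(plays, full_matrices=False)
track_factors = vt[:k].T * s[:k]  # one k-dimensional vector per track


def similarity(i, j):
    """Cosine similarity between two tracks in the latent space."""
    a, b = track_factors[i], track_factors[j]
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))


print(tracks[0], "vs", tracks[1], round(similarity(0, 1), 2))
print(tracks[0], "vs", tracks[2], round(similarity(0, 2), 2))
```

Tracks that the same listeners play together end up with nearly parallel latent vectors, which is what lets them be drawn as neighbors in a genre-mood graph.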

5. Anomaly Detection for 'Interesting Moments'

Some of the most memorable Wrapped cards spotlight unusual listening moments. To find them, Spotify employs unsupervised anomaly detection algorithms (isolation forests, autoencoders) to spot deviations in your behavior: a sudden spike in a new genre, a forgotten playlist revisited, a day you listened to the same song 50 times. These anomalies are flagged and scored, then passed to the narrative engine. For 2025 Wrapped, the system also considers temporal context, such as whether the anomaly coincides with a holiday or global event, to make the story more relatable.
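The flag-and-score step can be illustrated with a simpler detector than the isolation forests or autoencoders named above. This sketch swaps in the median/MAD modified z-score (the 0.6745 constant and 3.5 cutoff are the usual Iglewicz-Hoaglin conventions) applied to one song's daily play counts; the data and the `flag_spikes` helper are hypothetical.

```python
import statistics


def flag_spikes(daily_plays, threshold=3.5):
    """Flag days whose play count is far from the median, via the modified z-score.

    A simple stand-in for isolation forests or autoencoders: robust to the
    outliers it is looking for, because it uses the median and the median
    absolute deviation (MAD) instead of the mean and standard deviation.
    """
    median = statistics.median(daily_plays)
    mad = statistics.median(abs(x - median) for x in daily_plays)
    if mad == 0:
        return []  # no spread at all, so nothing stands out
    flagged = []
    for day, x in enumerate(daily_plays):
        score = 0.6745 * (x - median) / mad
        if abs(score) > threshold:
            flagged.append((day, round(score, 1)))
    return flagged


# Hypothetical plays of one song per day; day 5 is the "50 times" day.
plays = [2, 3, 1, 2, 4, 50, 3, 2]
print(flag_spikes(plays))  # [(5, 64.1)]
```

The returned score doubles as a ranking signal, so the narrative layer can pick the most striking moment first.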

6. Privacy-Preserving Aggregation with Differential Privacy

While Wrapped is deeply personal, it also respects your privacy. Spotify applies differential privacy techniques to all aggregate statistics shown in Wrapped, such as how your total listening minutes compare with other listeners'. This involves adding carefully calibrated noise to those aggregates before they ever reach your screen, so that no single listener's contribution can be reliably recovered from them. In 2025, the company upgraded its privacy framework so that the comparative figures on a publicly shared Wrapped card cannot be used to infer other individuals' listening habits. This balance of personalization and anonymity is crucial for user trust.
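"Calibrated noise" usually means something like the Laplace mechanism: for a counting query where any one listener changes the count by at most 1 (sensitivity 1), adding Laplace noise with scale `sensitivity / epsilon` gives epsilon-differential privacy. The sketch below is that textbook mechanism, not Spotify's framework; the epsilon value and the example count are hypothetical.

```python
import random


def laplace_noise(scale, rng):
    # The difference of two independent Exp(1) draws is Laplace(0, 1).
    return scale * (rng.expovariate(1.0) - rng.expovariate(1.0))


def private_count(true_count, epsilon, rng, sensitivity=1.0):
    """Laplace mechanism: add noise with scale sensitivity / epsilon."""
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(2025)
# Hypothetical aggregate: listeners in one city who streamed a given artist.
true_fans = 1_204
print(round(private_count(true_fans, epsilon=1.0, rng=rng)))
```

Smaller values of epsilon give stronger privacy at the cost of noisier figures; for large aggregates the noise is negligible relative to the count.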

7. Mobile-First Rendering with GPU-Accelerated Graphics

The visual spectacle of Wrapped (animations, color transitions, interactive charts) runs smoothly on hundreds of millions of devices. Spotify leverages GPU acceleration via its custom rendering engine, which pre-computes complex visual effects like particle systems for genre clouds and morphing album art. For 2025, the team adopted a reactive framework that prioritizes rendering based on device capabilities, ensuring even older phones get a crisp experience. The result is a Wrapped that feels like a mini movie, not a static report.
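Capability-based rendering can be sketched as a tier-selection function that runs before any animation starts. The `Device` fields, thresholds, and tier names below are hypothetical, chosen only to show the shape of the decision a client might make.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    # Hypothetical capability signals a client could measure at startup.
    gpu_score: int  # relative GPU benchmark, 0 to 100
    ram_gb: float
    low_power_mode: bool


def render_tier(device: Device) -> str:
    """Choose how much live GPU work the Wrapped animations should attempt."""
    if device.low_power_mode or device.ram_gb < 2:
        return "static"   # play precomputed frames only
    if device.gpu_score >= 70 and device.ram_gb >= 6:
        return "full"     # live particle systems and morphing album art
    return "reduced"      # fewer particles, simpler transitions


print(render_tier(Device(gpu_score=40, ram_gb=3, low_power_mode=False)))  # reduced
```

Degrading to precomputed frames rather than dropping effects entirely keeps the story identical across tiers; only the rendering cost changes.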

8. Eventual Consistency for Offline and Intermittent Connectivity

Not everyone has constant internet. Spotify's Wrapped system must handle users who listen offline or in areas with spotty coverage. Instead of requiring live sync, the platform uses an eventual consistency model: your listening data is stored locally and synced in batches when connectivity returns. For 2025, the team introduced a conflict resolution algorithm that merges duplicate entries and reconciles timestamps, so your offline road trip playlists are accurately reflected. This ensures that no listening moment, even one in a tunnel, is lost.
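The merge step can be sketched as two moves: shift offline timestamps onto the server clock, then collapse plays of the same track that start within a small window. This is a hypothetical illustration, not Spotify's algorithm; the `clock_offset_s` correction, the 2-second tolerance, and the keep-the-longer-play rule are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Play:
    track_id: str
    started_at: int  # Unix seconds
    ms_played: int


def merge_offline(server, offline, clock_offset_s, tolerance_s=2):
    """Merge an offline batch into the server log.

    clock_offset_s: device clock minus server clock, measured at sync time
    (a hypothetical correction). Two plays of the same track starting within
    tolerance_s seconds are treated as one play uploaded twice.
    """
    # Shift offline timestamps onto the server clock.
    corrected = [Play(p.track_id, p.started_at - clock_offset_s, p.ms_played)
                 for p in offline]
    merged = []
    for play in sorted(server + corrected, key=lambda p: (p.track_id, p.started_at)):
        prev = merged[-1] if merged else None
        if (prev is not None and prev.track_id == play.track_id
                and play.started_at - prev.started_at <= tolerance_s):
            # Duplicate: keep one copy, with the longer listening time.
            if play.ms_played > prev.ms_played:
                merged[-1] = Play(prev.track_id, prev.started_at, play.ms_played)
            continue
        merged.append(play)
    return sorted(merged, key=lambda p: p.started_at)


server = [Play("t1", 1_000, 200_000)]
# Device clock ran 60 s fast, and the first offline play had already been uploaded once.
offline = [Play("t1", 1_061, 200_000), Play("t2", 1_300, 180_000)]
print(merge_offline(server, offline, clock_offset_s=60))
```

Here the repeated `t1` play collapses into the server's copy, and `t2` lands at its corrected time of 1240.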

9. A/B Testing and Personalization Mosaics

How does Spotify decide which Wrapped story to show you first? Through massive A/B testing. The engineering team runs hundreds of variants each year, testing everything from headline wording to color schemes. For 2025, they introduced 'personalization mosaics'—dynamically assembled grids of your top tracks and episodes that adapt based on your engagement history. Machine learning models predict which mosaic layout will maximize delight and sharing, using reinforcement learning trained on previous years' user feedback. Every swipe on Wrapped is thus an optimized experience.
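Learning which layout to show from user feedback is, in its simplest form, a multi-armed bandit problem. The epsilon-greedy sketch below is a stand-in for whatever model Spotify actually uses; the layout names, the share-as-reward signal, and the simulated share rates are hypothetical.

```python
import random


class EpsilonGreedy:
    """Epsilon-greedy bandit over candidate mosaic layouts."""

    def __init__(self, layouts, epsilon=0.1, seed=None):
        self.layouts = list(layouts)
        self.epsilon = epsilon
        self.counts = {name: 0 for name in self.layouts}
        self.values = {name: 0.0 for name in self.layouts}  # running mean reward
        self.rng = random.Random(seed)

    def choose(self):
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.layouts)       # explore
        return max(self.layouts, key=self.values.get)  # exploit the best so far

    def update(self, layout, reward):
        self.counts[layout] += 1
        self.values[layout] += (reward - self.values[layout]) / self.counts[layout]


# Simulated users: hypothetical probability that each layout gets shared.
share_rate = {"grid": 0.05, "carousel": 0.30, "hero": 0.10}
users = random.Random(1)
bandit = EpsilonGreedy(share_rate, epsilon=0.1, seed=7)
for _ in range(20_000):
    layout = bandit.choose()
    reward = 1 if users.random() < share_rate[layout] else 0  # 1 = shared
    bandit.update(layout, reward)

print(max(bandit.values, key=bandit.values.get))
```

Unlike a fixed A/B split, a bandit shifts traffic toward the winning layout while the test is still running, which reduces how many users see weaker variants.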

10. The Human Touch: Editorial Oversight and Curators

Despite all the algorithms, the final Wrapped is reviewed by human curators. Editors from Spotify’s music and podcast teams manually verify the top charts for major markets, ensuring no bizarre glitches (like a 10-second silence as your top track). For 2025, a new 'cultural relevance' layer was added: if an artist or podcast had a significant global moment (e.g., a viral TikTok trend), the system elevates its visibility in Wrapped narratives. This blend of cold data and warm human judgment prevents Wrapped from feeling too machine-generated.
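Both safeguards can be sketched as small checks that run before anything is published. The 30-second cutoff, the `trending` set, and the 1.2 boost factor are hypothetical; the idea is that suspicious chart entries are routed to a human editor rather than silently dropped, while culturally relevant subjects rank higher among narrative candidates.

```python
MIN_DURATION_S = 30  # hypothetical cutoff for a "suspiciously short" top track


def flag_for_review(top_tracks, min_duration_s=MIN_DURATION_S):
    """Return top-chart entries an editor should look at before publishing."""
    return [t for t in top_tracks if t["duration_s"] < min_duration_s]


def rank_story_candidates(candidates, trending, boost=1.2):
    """Order narrative candidates, boosting subjects tied to a global moment."""
    def weighted(c):
        return c["score"] * (boost if c["subject"] in trending else 1.0)
    return sorted(candidates, key=weighted, reverse=True)


top_tracks = [
    {"track_id": "ten_seconds_of_silence", "duration_s": 10},
    {"track_id": "summer_hit", "duration_s": 205},
]
print(flag_for_review(top_tracks))  # [{'track_id': 'ten_seconds_of_silence', 'duration_s': 10}]

candidates = [{"subject": "artist_a", "score": 8.0}, {"subject": "artist_b", "score": 7.0}]
print([c["subject"] for c in rank_story_candidates(candidates, trending={"artist_b"})])
# ['artist_b', 'artist_a']
```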

From stream processing to human curation, your 2025 Wrapped is a marvel of engineering and storytelling. As Spotify continues to evolve, expect future Wrapped experiences to become even more interactive—perhaps incorporating your own voice reactions or live listening parties. For now, enjoy the magic, and remember: every beat, every skip, every playlist repeat played a part in creating your unique audio story.
